Google I/O 2017 is Now in Full Swing: Here’s What You Need to Know

Google I/O 2017 is upon us, and as the Keynote indicates, Google has big plans for both Google Assistant and its artificial intelligence systems. Without further ado, let’s dive right into the announcements that were made during the I/O Keynote.

Google Assistant

2017 may turn out to be the year of Google Assistant, as Google has announced that its digital assistant will be expanding aggressively onto new platforms. For starters, iPhone users in the U.S. can now download and use Google Assistant from the App Store, giving them the option to choose between it and Siri.

That being said, Google has warned that due to API restrictions, the iOS version of Google Assistant will only be capable of doing rather basic tasks.

Aside from iOS, Google has announced that Google Assistant will also be making its way into smart home appliances such as fridges, light bulbs and washing machines. Companies that will be launching Google Assistant-ready appliances include LG, Sony, Panasonic, and many more.

Building upon the expansion of Google Assistant onto other devices, Google has also announced that it will be releasing an SDK for the assistant, allowing developers to add Assistant capabilities to their apps or devices if they choose to do so.

Expansion aside, Google has also added a slew of improvements to the Assistant. For starters, you can now type your questions to Google Assistant using a keyboard instead of speaking them. Besides that, Google has made it possible to perform transactions entirely via Google Assistant.

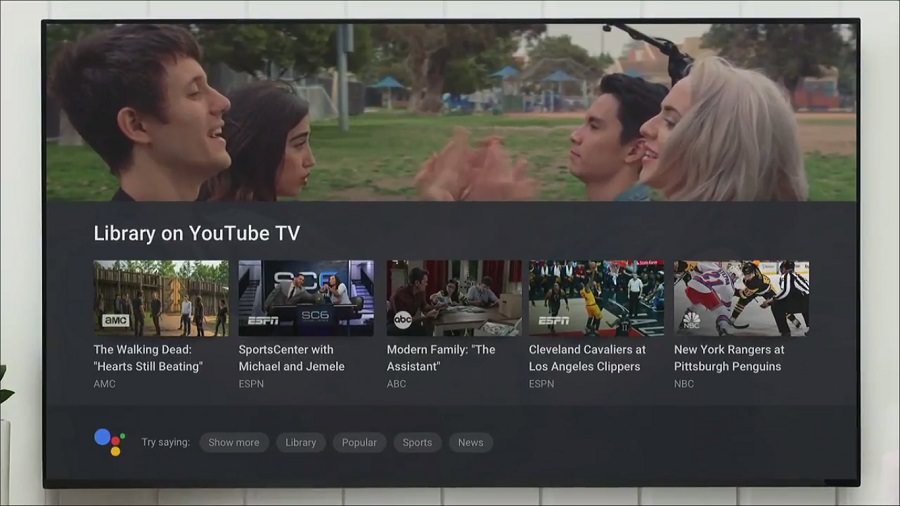

Google Home and Android TV

Google’s own smart speaker will be getting a huge upgrade within the year, as the I/O Keynote revealed an array of new features that will be coming to Google Home.

Perhaps the biggest feature that will be coming to Google Home is what Google is referring to as Proactive Assistance. According to Google, this feature will allow Google Home to keep track of reminders, traffic alerts and flight statuses.

When a scheduled event draws near, the speaker will light up to inform the owner that an item is pending, after which the owner can ask the speaker "What’s up?" to receive the update.

While the feature feels rather basic right now, Proactive Assistance has the potential to grow over time.

You’ll also be able to make phone calls with Google Home, as the company announced that a new calling feature will be added to the speaker. Only outgoing calls will be supported at launch, and the calls themselves are completely free as long as the numbers dialled are located in the U.S. or Canada.

The next two features for Google Home relate to Google’s own Android TV platform. In a future update, owners of both a Google Home and an Android TV will be able to ask Google Home to show information on their Android TV.

Some of the commands that were demonstrated at the Keynote include "show my calendar on the TV" and "show me nearby restaurants on the TV".

Besides information, Google is also letting you control your Android TV using Google Home via Cast. While you can’t exactly navigate an entire app with Google Home, you can ask it to perform actions like showing you what’s on your DVR.

Don’t have a Google Home? Don’t worry, as Android TV will be getting Google Assistant integration as well.

Finally, Google will also be adding new actions to Google Home, allowing the speaker to control additional services like HBO Now and Spotify Free. In addition to that, the smart speaker will finally be able to support Bluetooth audio.

Android O

Unfortunately, Google didn’t announce the new name for Android O at the I/O Keynote. Nevertheless, the company did reveal more details as to what it’s hoping to achieve with Android O, and the answer is Vitals and Fluid experiences.

On the Vitals side, Google is looking to improve the battery life, stability, startup time and security of Android with Android O. For battery life, Android O will come with something called "wise limits" that are applied to background location and app activities.

With it, the OS will be able to monitor and regulate its energy consumption, preventing it from draining more energy from the phone than needed.

As for startup time, Google claims that Android O will boot twice as fast and that apps will launch faster as well. This is thanks to the "extensive" changes that were made to the operating system’s core.

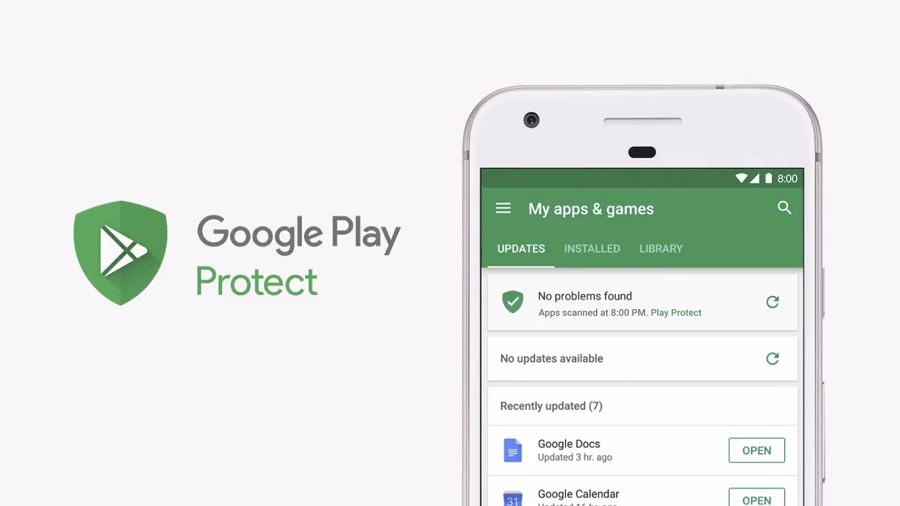

As for security, Google will be introducing Google Play Protect with Android O, a feature that scans apps installed on your phone to make sure that they’re not malicious. Seeing as Google already scans apps on the Play Store, this feature acts as an additional layer of security for your Android device.

On the Fluid experiences side, Android O will come with an iOS-like app badging feature that marks apps with pending notifications using a small dot on the top-right corner of the app icon.

By long-pressing on the app icon, you’ll be able to see the number of notifications that it has received.

Also coming to Android O is the ability to copy a long string of text by just double tapping on it. This means you’ll no longer have to drag handles around to highlight a location’s address.

Does Android O sound interesting to you? If so, then you might be interested to know that the Beta version of Android O is now available. Those who own an eligible Pixel or Nexus device and want to try out the new OS can opt into the Beta by registering here.

Google Lens

At the I/O Keynote, Google made mention of a number of new features that are built using the company’s knowledge of artificial intelligence and machine learning. Google Lens is one such feature.

Essentially an improved version of Google Goggles, Google Lens is a feature that is integrated into Google Assistant and Google Photos. With it, the camera will be able to "understand what you see".

From the onstage demo of Google Lens, the system appears to be capable of recognizing the item it is focusing on.

Another demo showed that Google Lens can also pull up information about a location when you point your device’s camera at it.

Finally, Google Lens can also save and apply information to your Android device. In one onstage demo, a man pointed the camera at a router’s network name and password, which were then immediately applied on the phone.

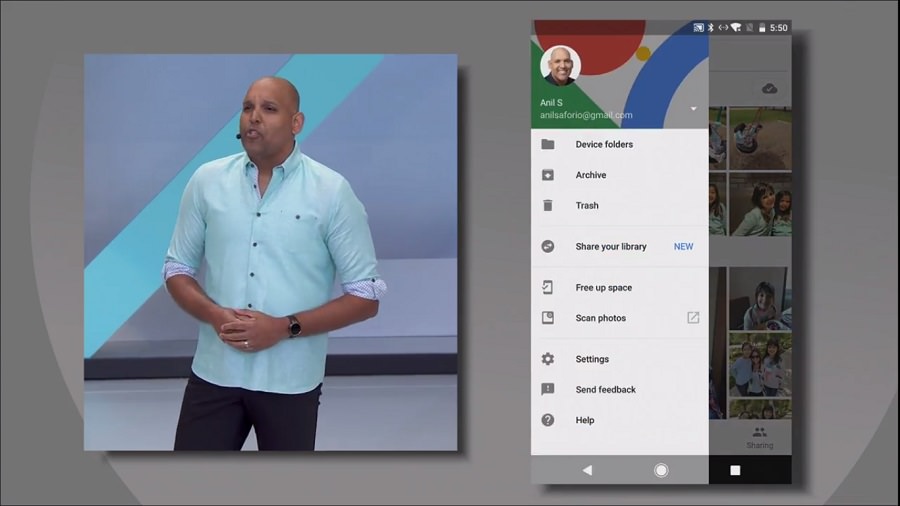

Google Photos

Since I’m on the subject of photography, let’s talk about Google Photos as it appears that Google is adding even more machine learning-based features into the platform, turning it into a pseudo-social network service of sorts.

With the updated Google Photos, the app will now come with a feature called Suggested Sharing. By utilising facial recognition technology, Google Photos can now identify the people that are in the picture and suggest that you share those pictures with them.

Alongside Suggested Sharing, Google Photos will also come with an option called Shared Libraries.

It lets you share some or all of your photos with a specific set of people.

Lastly, you’ll be able to use the photos stored in Google Photos to create physical photo books thanks to the Photo Books feature. Once the update for Google Photos goes live:

- The app will be able to identify 40 of your best photos and turn them into a physical photo book created by Google.

- Each photo book has 20 pages.

- Books come in a softcover variant (9.99 USD) or a hardcover variant (19.99 USD).

- Additional pages cost 0.35 USD per page for softcover books or 0.65 USD per page for hardcover books.
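
To make the pricing above concrete, here is a minimal Python sketch that works out the cost of a book from the figures quoted in the list. The function and constant names are made up for illustration, and the sketch assumes that a book never has fewer than 20 pages and that shipping and taxes are not included, none of which is confirmed by the announcement.

```python
from decimal import Decimal

# Prices quoted in the article, in USD. All names here are hypothetical.
BASE_PAGES = 20
BASE_PRICE = {"softcover": Decimal("9.99"), "hardcover": Decimal("19.99")}
EXTRA_PAGE_PRICE = {"softcover": Decimal("0.35"), "hardcover": Decimal("0.65")}


def photo_book_price(cover: str, pages: int = BASE_PAGES) -> Decimal:
    """Estimate the price in USD of a photo book, excluding shipping and tax."""
    if cover not in BASE_PRICE:
        raise ValueError(f"unknown cover type: {cover!r}")
    if pages < BASE_PAGES:
        # Assumption: 20 pages is the minimum size of a book.
        raise ValueError(f"a book has at least {BASE_PAGES} pages")
    extra_pages = pages - BASE_PAGES
    return BASE_PRICE[cover] + extra_pages * EXTRA_PAGE_PRICE[cover]


print(photo_book_price("softcover"))      # 9.99
print(photo_book_price("softcover", 30))  # 9.99 + 10 * 0.35 = 13.49
print(photo_book_price("hardcover", 24))  # 19.99 + 4 * 0.65 = 22.59
```

Decimal is used instead of float so that the cent amounts add up exactly.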

Photo Books are now available for the desktop version of Google Photos. iOS and Android versions of Google Photos will get this feature next week.

Google for Jobs

Google’s search engine has been used for information, travel and so much more. In the near future, the search engine can also help you look for a job.

Google will be launching Google for Jobs, a search engine that looks for available jobs in your area, in partnership with LinkedIn, Monster, CareerBuilder, Glassdoor and Facebook.

The system uses a combination of Google search, machine learning, job boards, staffing agencies and applicant tracking systems to find work near you.

As Google for Jobs doesn’t discriminate between entry-level jobs and professional careers, anyone can use the system to find a new place of employment. This feature will first be made available in the U.S. before being rolled out to other countries.

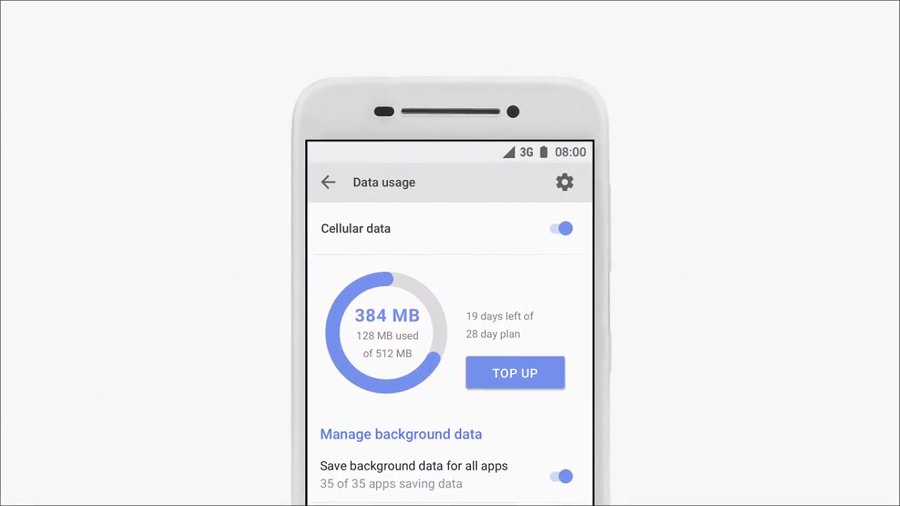

Android Go

Simply put, Android Go is a lightweight version of the Android operating system. Designed to run on devices with just 1GB of memory, Android Go is tailored towards emerging markets around the world.

In order to make Android Go lightweight, Google has designed certain apps on the OS to use "less memory, storage space and mobile data".

Examples of these apps include Gboard, YouTube Go and Chrome.

On top of being lightweight, some of the apps on Android Go are designed with the potential markets in mind. For example, Gboard will support transliteration, allowing users to type the phonetic spelling of words in other languages, which the software will then convert into the native script.

This feature will prove to be particularly useful in markets such as India and South America.

Google Daydream and Tango

It appears that the rumours are indeed true. There will be standalone Daydream VR headsets on the market this year.

Developed in partnership with Qualcomm, HTC Vive and Lenovo, these Daydream VR headsets will be capable of running independently, meaning you won’t need to plug a phone into the headset to make it work.

On top of running on the Daydream platform, these standalone VR headsets will also utilise WorldSense, a positional tracking system that is based on Tango’s 3D mapping technology. This allows the headset to operate without the need for external sensors.

Rounding out the Daydream and Tango announcements, Google has revealed that the recently released Samsung Galaxy S8 and S8+ will be receiving Daydream VR support this summer.

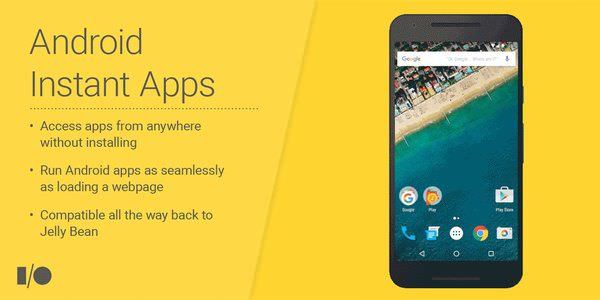

Instant Apps

Remember Instant Apps? After a year’s worth of testing, the feature is now available for all developers to use.

For those who don’t know, Instant Apps is Google’s method of breaking native apps into smaller pieces that can be run instantly without requiring the corresponding app to be installed on the device first.

Those who are interested in leveraging this feature can opt to do so here.

Kotlin is now officially supported by Android

Rounding out the announcements that were made during the I/O Keynote, Google announced that Kotlin, the new programming language developed by JetBrains, will now be officially supported on the Android platform.

Starting from Android Studio 3.0, Google will now include Kotlin tools as part of the IDE. In addition to that, both JetBrains and Google have pledged to support the language from this point forward.

For developers who are worried that Kotlin will replace Java and C++, Google has assured them that Kotlin is an addition and not a replacement for either of the existing languages, so you’ll still be able to develop applications for Android using either of them.
