5 Best Web Scraping Tools to Extract Online Data

Web Scraping tools are specifically developed for extracting information from websites. They are also known as web harvesting tools or web data extraction tools. These tools are useful for anyone trying to collect some form of data from the Internet. Web Scraping is the new data entry technique that doesn’t require repetitive typing or copy-pasting.

This software looks for new data manually or automatically, fetches the new or updated data, and stores it for easy access. For example, one may collect info about products and their prices from Amazon using a scraping tool.

In this post, we’re listing the use cases of web scraping tools and the top 5 web scraping tools to collect information with zero coding.

When to use Web Scraping Tools?

Web Scraping tools can be used for unlimited purposes in various scenarios, but we’re going to go with some common use cases that apply to general users.

1. Collect data for market research

Web scraping tools can help keep you abreast of where your company or industry is heading in the next six months, serving as a powerful tool for market research.

The tools can fetch data from multiple data analytics providers and market research firms and consolidate them into one spot for easy reference and analysis.

2. Extract contact information

These tools can also be used to extract data such as emails and phone numbers from various websites, making it possible to have a list of suppliers, manufacturers, and other persons of interest to your business or company, alongside their respective contact addresses.
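For readers curious about what such a tool does under the hood, here is a minimal sketch of contact extraction using only Python's standard library. The patterns, the `extract_contacts` helper, and the sample page are illustrative assumptions, not how any tool in this list works; real email and phone formats vary far more than these expressions cover.

```python
import re

# Deliberately simple patterns for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def extract_contacts(page_text: str) -> dict:
    """Return the unique emails and phone numbers found in a page's text."""
    emails = sorted(set(EMAIL_RE.findall(page_text)))
    phones = sorted(set(match.strip() for match in PHONE_RE.findall(page_text)))
    return {"emails": emails, "phones": phones}


# Hypothetical page content; example.com is a reserved documentation domain.
SAMPLE_PAGE = """
<p>Sales: sales@example.com, phone +1 (555) 010-2030</p>
<p>Support: support@example.com or sales@example.com</p>
"""

contacts = extract_contacts(SAMPLE_PAGE)
print(contacts["emails"])
print(contacts["phones"])
```

Duplicates are collapsed with a set, so an address that appears on several pages is listed only once.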

3. Download solutions from StackOverflow

Using a web scraping tool, one can also download solutions for offline reading or storage by collecting data from multiple sites (including StackOverflow and other Q&A websites).

This reduces dependence on an active Internet connection, as the resources remain available whether or not you have Internet access.

4. Look for jobs or candidates

Web scraping tools are useful both for recruiters who are actively looking for candidates to join their team and for job seekers who are looking for a particular role or vacancy.

These tools can fetch listings based on the filters you apply, so you can retrieve relevant data without manual searches.

5. Track prices from multiple markets

If you are into online shopping and love actively tracking the prices of products you are looking for across multiple markets and online stores, then you need a web scraping tool.
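Once prices have been collected, comparing them is straightforward. The sketch below assumes the scraped prices arrive as US-style strings (such as `$1,299.00`) keyed by store; the store names, the `parse_price` and `cheapest_offer` helpers, and the figures are all made up for illustration.

```python
from decimal import Decimal


def parse_price(text: str) -> Decimal:
    """Convert a US-style price string such as '$1,299.00' into a Decimal."""
    cleaned = "".join(ch for ch in text if ch.isdigit() or ch == ".")
    return Decimal(cleaned)


def cheapest_offer(offers: dict) -> tuple:
    """Return (store, price) for the lowest price among the scraped offers."""
    parsed = {store: parse_price(raw) for store, raw in offers.items()}
    store = min(parsed, key=parsed.get)
    return store, parsed[store]


# Hypothetical scraped data for a single product.
scraped_offers = {
    "store-a.example": "$1,299.00",
    "store-b.example": "$1,249.50",
    "store-c.example": "$1,310.00",
}

store, price = cheapest_offer(scraped_offers)
print(f"Lowest price: {price} at {store}")
```

`Decimal` is used instead of `float` so that currency amounts compare exactly.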

Examples of great Web Scraping Tools

Let’s look at some of the best web scraping tools available. Some of them are free, and some of them have trial periods and premium plans. Do look into the details before you subscribe to any one of them for your needs.

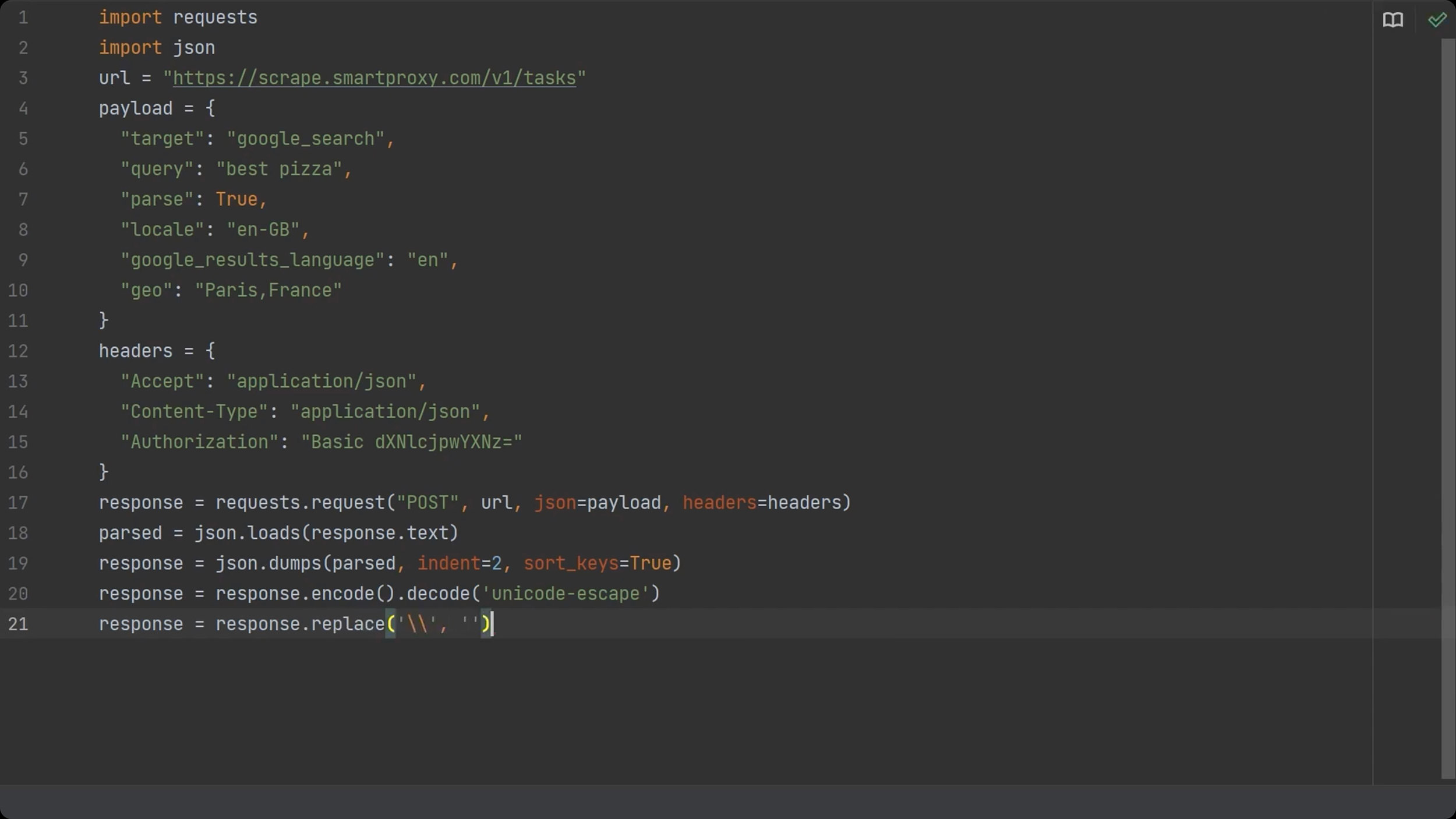

1. Smartproxy SERP Scraping API

Web scraping Google search results pages can be a real headache without the proper setup. Smartproxy’s SERP scraping API is a great solution for that. This SERP API combines a huge proxy network, web scraper, and data parser.

It’s a full-stack solution that lets you get structured data from major search engines with a single API request, for which Smartproxy advertises a 100% success rate.

You can target any country, state, or city and get raw HTML results or parsed JSON results. Whether it’s checking keyword rankings and tracking other SEO metrics in real-time, retrieving paid and organic data or monitoring prices, Smartproxy’s search engine proxies cover it all.

You can get them for $100/month + VAT.

2. Sitechecker

Sitechecker offers a cloud-based website crawler that crawls your site in real-time and provides a technical SEO analysis. On average, the tool crawls up to 300 pages in 2 minutes, scanning all internal and external links, and gives you a comprehensive report right on your dashboard.

You can customize the crawler rules and filters with flexible settings according to your requirements and get a reliable website score that tells you about the health of your site.

Additionally, it’ll notify you through email about all the issues on your site, and you can also collaborate with your team members and contractors by sending a shareable link to the project.

3. Oxylabs Scraper APIs

Oxylabs’ Scraper APIs can extract public web data from even the most complex pages. It is best for large-scale web scraping operations. There are four Scraper APIs: SERP Scraper API, E-Commerce Scraper API, Real Estate Scraper API, and Web Scraper API.

Each Scraper API is specifically built for different targets to improve overall performance and user experience. Starting at $99/month. All Scraper APIs guarantee the following:

- Pay only for successful results.

- Easy access to localized content.

- Effortless scaling for your growing needs.

- 102M+ proxy pool.

- Data delivery to your cloud storage bucket (AWS S3 or GCS).

- Bypass geo-restrictions effortlessly with noticeably fewer CAPTCHAs or IP blocks.

- 24/7 support via live chat and email.

- 7-day free trial with no commitment.

- No credit card is required.

Pricing models: Free (5K pages, 5 results/s); Starter Plan, $99/month (29K pages, 15 results/s); Business Plan, $399/month (160K pages, 50 results/s); Corporate Plan, $999/month (526K pages, 100 results/s).

4. Scraper API

Scraper API is designed to simplify web scraping. This proxy API tool is capable of managing proxies, web browsers & CAPTCHAs.

It supports popular programming languages such as Bash, Node, Python, Ruby, Java, and PHP. Scraper API has many features; some of the main ones are:

- Fully customizable (request type, request headers, headless browser, IP geolocation).

- IP rotation.

- Over 40 million IPs.

- Capable of JavaScript Rendering.

- Unlimited bandwidth with speeds up to 100Mb/s.

- More than 12 geolocations.

- Easy to integrate.

Pricing models: Scraper API offers four plans: Hobby ($29/month), Startup ($99/month), Business ($249/month), and Enterprise.

5. Scrapingdog

Scrapingdog claims to have one of the fastest proxy APIs for web data scraping. With more than 40 million IPs available, the tool sends each request through a new IP so your scraping is not blocked or prevented.

Moreover, the tool uses headless Chrome that enables users to scrape websites that render data in JavaScript. You can also prepare a dedicated script to scrape data from a particular website.

- Highly scalable web scraper

- Rotating proxies and headless Chrome ensure seamless data collection

- Additional APIs for LinkedIn and Google search

- Easy-to-use no code functionality

- Screenshot API for full or partial screenshots of data

Pricing models: Free for the first 1,000 API calls, Lite: $30/month, Standard: $90/month, Pro: $200/month, and Enterprise: $500+/month.
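The idea of sending each request through a different IP can be sketched as a simple round-robin over a proxy pool. This is only an illustration of the general technique, not Scrapingdog's implementation; the `ProxyRotator` class, the `plan_requests` helper, and the proxy addresses (taken from a reserved documentation range) are assumptions, and no network requests are made.

```python
from itertools import cycle


class ProxyRotator:
    """Hand out proxies in round-robin order so consecutive requests differ."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._pool = cycle(proxies)

    def next_proxy(self) -> str:
        return next(self._pool)


def plan_requests(urls, rotator):
    """Pair each URL with the proxy it would be sent through."""
    return [(url, rotator.next_proxy()) for url in urls]


# Hypothetical proxy pool and target URLs.
rotator = ProxyRotator(["203.0.113.10:8080", "203.0.113.11:8080"])
plan = plan_requests(
    ["https://example.com/a", "https://example.com/b", "https://example.com/c"],
    rotator,
)
for url, proxy in plan:
    print(f"{url} via {proxy}")
```

With a pool of millions of addresses, as the services above advertise, the same IP would rarely be reused in quick succession.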

Bonus: A few more…

HipSocial Web Scraper

HipSocial lets you scrape the web for interesting content so you can easily post it on social media. You can extract data from targeted sites and post it directly from the tool through its integrations with popular social media platforms.

The tool features NinjaSEO Bot – a Chrome extension bot – that enables you to scrape large volumes of data without any programming required. Apart from the textual content, you can scrape images relevant to your brand or your client’s.

HipSocial also offers a social listening feature to measure the performance of your social media communication activities, as well as a social media analytics tool to gauge what your followers are interested in.

HipSocial offers a ‘one price for 50 apps’ pricing package, ranging from $14.99/month (cloud) to $74.95/month (Enterprise).

Import.io

Import.io offers a builder to form your own datasets by simply importing the data from a particular web page and exporting the data to CSV. You can easily scrape thousands of web pages in minutes without writing a single line of code and build 1000+ APIs based on your requirements.

Import.io uses cutting-edge technology to fetch millions of data points every day, which businesses can access for a small fee. Along with the web tool, it also offers free apps for Windows, macOS and Linux to build data extractors and crawlers, download data, and sync with the online account.
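To show what exporting scraped data to CSV amounts to, here is a small sketch using Python's built-in `csv` module. It is not Import.io's exporter; the `rows_to_csv` helper and the sample rows are hypothetical.

```python
import csv
import io


def rows_to_csv(rows, fieldnames) -> str:
    """Serialize a list of dict rows into CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical rows, as they might come out of a scraper.
scraped_rows = [
    {"title": "Widget", "price": "9.99"},
    {"title": "Gadget, large", "price": "24.50"},
]

print(rows_to_csv(scraped_rows, ["title", "price"]))
```

The `csv` module quotes values that contain commas, so a title like "Gadget, large" stays in a single column.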

Dexi.io (formerly known as CloudScrape)

Dexi.io supports data collection from any website and requires no download. It provides a browser-based editor to set up crawlers and extract data in real-time. You can save the collected data on cloud platforms like Google Drive and Box.net or export it as CSV or JSON.

Dexi.io also supports anonymous data access by offering a set of proxy servers to hide your identity. It stores your data on its servers for two weeks before archiving it. The web scraper offers 20 scraping hours for free and costs $29 per month after that.

Zyte

Zyte (formerly Scrapinghub) is a cloud-based data extraction tool that helps thousands of developers to fetch valuable data. Zyte uses Crawlera, a smart proxy rotator that supports bypassing bot counter-measures to crawl huge or bot-protected sites easily.

Zyte converts the entire web page into organized content. Its team of experts is available to help in case its crawl builder can’t meet your requirements. Its basic free plan gives you access to 1 concurrent crawl, and its premium plan for $25 per month provides access to up to 4 parallel crawls.

ParseHub

ParseHub is built to crawl single and multiple websites with support for JavaScript, AJAX, sessions, cookies, and redirects. The application uses machine learning technology to recognize the most complicated documents on the web and generates the output file based on the required data format.

Apart from the web app, ParseHub is also available as a free desktop application for Windows, macOS, and Linux. Its basic free plan covers five crawl projects, and its premium plan costs $89 per month with support for 20 projects and 10,000 web pages per crawl.

ScrapingBot

ScrapingBot is a great web scraping API for web developers who need to scrape data from a URL. It works particularly well on product pages, where it collects all you need (image, product title, product price, product description, stock, delivery costs, etc.). It is a great tool for those who need to collect commerce data or simply aggregate product data and keep it accurate.

ScrapingBot also offers various specialized APIs such as real estate, Google search results, or data collection on social networks (LinkedIn, TikTok, Instagram, Facebook, Twitter).

Features

- Headless Chrome

- Response time

- Concurrent requests

- Allows for large bulk scraping needs.

Pricing

- Free to use with 100 credits every month. Paid packages are priced at €39, €99, €299, and €699 per month.

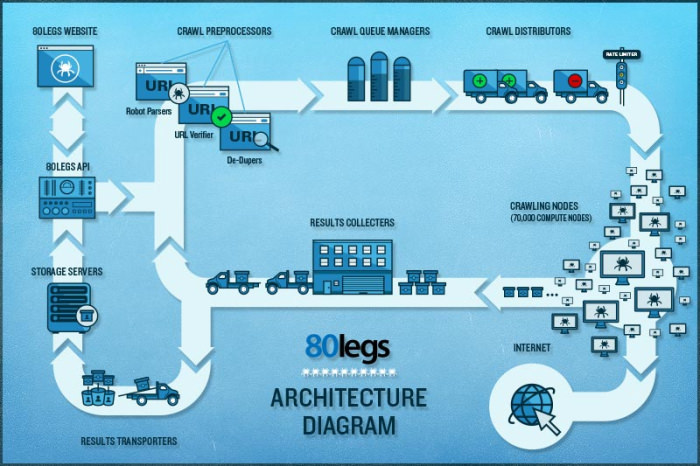

80legs

80legs is a powerful yet flexible web crawling tool that can be configured to your needs. It supports fetching huge amounts of data along with the option to download the extracted data instantly. The web scraper claims to crawl 600,000+ domains and is used by big players like MailChimp and PayPal.

Its ‘Datafiniti’ service lets you search the entire data set quickly. 80legs provides high-performance web crawling that works rapidly and fetches required data in seconds. It offers a free plan for 10K URLs per crawl and can be upgraded to an intro plan for $29 per month for 100K URLs per crawl.

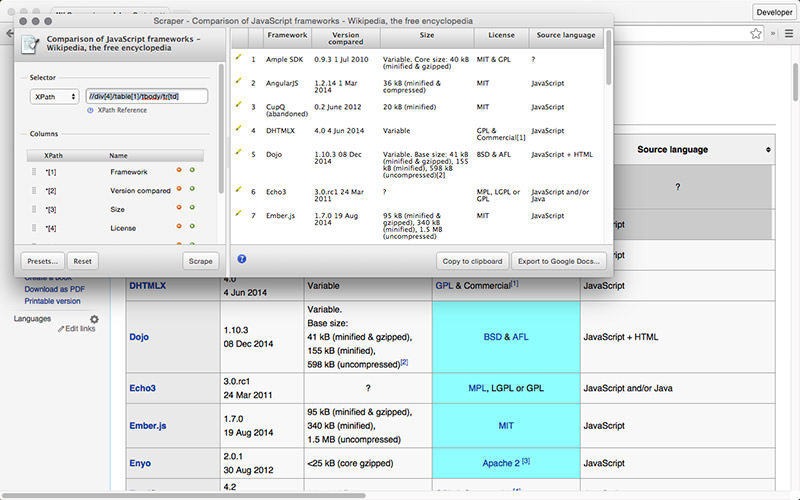

Scraper

Scraper is a Chrome extension with limited data extraction features, but it helps with online research and exporting data to Google Spreadsheets. This tool is intended for beginners and experts alike, who can easily copy data to the clipboard or store it in spreadsheets using OAuth.

Scraper is a free tool that works right in your browser and auto-generates smaller XPaths for defining URLs to crawl. It doesn’t offer you the ease of automatic or bot crawling like Import.io, Webhose, and others, but that is also a benefit for novices, as there is no messy configuration to tackle.
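If XPaths are new to you, the following sketch shows how one selects elements from a page. It uses Python's standard `xml.etree.ElementTree`, which supports only a limited subset of XPath and needs well-formed markup; the sample table and the `select_text` helper are made up for illustration and are unrelated to what the Scraper extension generates.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed page fragment.
SAMPLE = """
<html><body>
  <table id="prices">
    <tr><td class="name">Widget</td><td class="price">9.99</td></tr>
    <tr><td class="name">Gadget</td><td class="price">24.50</td></tr>
  </table>
</body></html>
"""


def select_text(markup: str, xpath: str) -> list:
    """Return the text of every element matched by the XPath expression."""
    root = ET.fromstring(markup.strip())
    return [element.text for element in root.findall(xpath)]


names = select_text(SAMPLE, ".//table[@id='prices']/tr/td[@class='name']")
prices = select_text(SAMPLE, ".//table[@id='prices']/tr/td[@class='price']")
print(names)
print(prices)
```

Real-world pages are rarely well-formed XML, which is why dedicated scraping tools rely on more forgiving HTML parsers.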

Which is your favorite web scraping tool or add-on? What data do you wish to extract from the Internet? Do share your story with us using the comments section below.