8 Next-Generation User Interfaces That Are (Almost) Here

When we talk about user interface (UI) in computing, we’re referring to how a computer program or system presents itself to its user, usually via graphics, text and sound. We’re all familiar with the typical Windows and Apple operating systems, where we interact with desktop icons using a mouse cursor. Before that, we had the old-school text-based command-line interface.

The shift from text to graphics was a major leap, popularized by Apple co-founder Steve Jobs with the Macintosh in 1984. In recent years, we’ve also witnessed innovative UIs that use touch (e.g. smartphones), voice (e.g. Siri) and even gestures (e.g. Microsoft Kinect). These, however, are still in their early stages of development.

Nevertheless, they give us a clue as to what the next UI revolution may look like. Curious? Here are 8 key features of what next-generation UI may be like:


1. Gesture Interfaces

The 2002 sci-fi movie Minority Report portrayed a future where we interact with computer systems primarily through gestures. Wearing a pair of futuristic gloves, the protagonist, played by Tom Cruise, performs various hand gestures to manipulate images, videos and datasheets on his computer system.

A decade ago, a user interface that detects spatial motion this seamlessly might have seemed a little far-fetched. Today, with the advent of motion-sensing devices like the Wii Remote in 2006 and the Kinect and PlayStation Move in 2010, the user interfaces of the future might just be heading in that direction.

In gesture recognition, the input comes in the form of hand or other bodily motions, used to perform computing tasks that to date are still mostly entered via an input device, a touchscreen or voice. Adding a z-axis (depth) to our existing two-dimensional UI will undoubtedly improve the human-computer interaction experience. Just imagine how many more functions could be mapped to our body movements.

Well, here’s a demo video of g-speak, a prototype of the computer interface seen in Minority Report, designed by John Underkoffler, who was actually the film’s science advisor. Watch how he navigates through thousands of photos on a 3D plane using hand gestures and collaborates with fellow ‘hand-gesturers’ on team tasks. Excited? Underkoffler believes that such a UI will be commercially available within the next five years.

2. Brain-Computer Interface

Our brains generate electrical signals as we think, so much so that specific thoughts produce their own distinguishable brainwave patterns. These patterns can be mapped to specific commands, so that simply thinking the thought carries out the command.
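To make the idea of mapping a thought’s pattern to a command a little more concrete, here is a minimal Python sketch. It assumes the headset has already reduced each brainwave reading to a short feature vector; the template values, labels and commands are all invented for illustration and are not how the EPOC or any real headset works. The nearest stored template wins, and a reading that isn’t close to any template is rejected, which is one simple way to avoid firing the wrong command.

```python
import math

# Hypothetical "signatures" for three trained thoughts. Each is a feature
# vector (think: relative signal strength in a few frequency bands).
# The numbers are invented for illustration.
TEMPLATES = {
    "pull": [0.8, 0.2, 0.1],
    "push": [0.1, 0.9, 0.3],
    "neutral": [0.3, 0.3, 0.3],
}

# The command bound to each thought (also hypothetical).
COMMANDS = {
    "pull": "move cube toward user",
    "push": "move cube away from user",
    "neutral": "do nothing",
}


def classify(features, templates=TEMPLATES, max_distance=0.5):
    """Return the label of the nearest template, or None if none is close enough."""
    best_label, best_dist = None, float("inf")
    for label, template in templates.items():
        dist = math.dist(features, template)  # Euclidean distance (Python 3.8+)
        if dist < best_dist:
            best_label, best_dist = label, dist
    # Reject readings that don't resemble any trained thought.
    return best_label if best_dist <= max_distance else None


def command_for(features):
    """Translate a feature vector into the command bound to its thought."""
    label = classify(features)
    return COMMANDS.get(label, "unrecognized")


print(command_for([0.75, 0.25, 0.15]))  # move cube toward user
print(command_for([5.0, 5.0, 5.0]))     # unrecognized
```

The `max_distance` threshold is the trade-off here: set it too tight and genuine thoughts go unrecognized, too loose and noise triggers commands.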

With the EPOC neuroheadset, created by Tan Le, co-founder and president of Emotiv Lifescience, users don a futuristic headset that detects the brainwaves generated by their thoughts.

As you can see from this demo video, the command executed by thought is pretty primitive (i.e. pulling the cube towards the user), and yet detection still seems to struggle. It looks like this UI may take a while to mature.

In any case, envision a (distant) future where you could operate computer systems with thoughts alone. From a ‘smart home’ where you could turn the lights on or off without stepping out of bed in the morning, to an immersive gaming experience that responds to your mood (via brainwaves), the potential for such a UI is practically limitless.

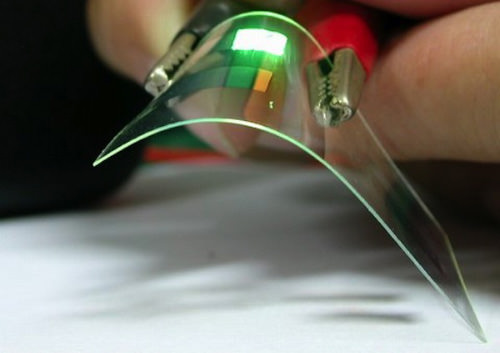

3. Flexible OLED Display

If you find smartphone touchscreens rigid and not responsive enough to your commands, you might be first in line to try out flexible OLED (organic light-emitting diode) displays. An OLED uses an organic semiconductor that can still emit light even when rolled or stretched. Put it on a bendable plastic substrate and you have a brand-new, less rigid smartphone screen.

Furthermore, these screens can be twisted, bent or folded as a way of interacting with the computing system within: bend the phone to zoom in and out, twist one corner to turn the volume up, twist the other corner to turn it down, twist both sides to scroll through photos, and more.
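Controls like these boil down to a lookup from physical gesture to action. Here is a minimal Python sketch, assuming a hypothetical driver that reports each bend or twist as a named gesture; the gesture names, zoom factor and 0 to 10 volume range are illustrative and not taken from any real device.

```python
from dataclasses import dataclass


@dataclass
class PhoneState:
    zoom: float = 1.0
    volume: int = 5  # assumed range 0..10
    photo_index: int = 0


def zoom_in(state):
    state.zoom *= 1.25


def zoom_out(state):
    state.zoom /= 1.25


def volume_up(state):
    state.volume = min(state.volume + 1, 10)


def volume_down(state):
    state.volume = max(state.volume - 1, 0)


def next_photo(state):
    state.photo_index += 1


# Hypothetical gesture names, as a flexible-display driver might report them.
GESTURE_ACTIONS = {
    "bend_inward": zoom_in,
    "bend_outward": zoom_out,
    "twist_right_corner": volume_up,
    "twist_left_corner": volume_down,
    "twist_both_sides": next_photo,
}


def handle(state, gesture):
    """Run the action bound to a gesture; unknown gestures are ignored."""
    action = GESTURE_ACTIONS.get(gesture)
    if action is not None:
        action(state)
    return state


phone = PhoneState()
for g in ["bend_inward", "twist_right_corner", "twist_right_corner",
          "twist_both_sides", "wiggle"]:
    handle(phone, g)
print(phone)  # PhoneState(zoom=1.25, volume=7, photo_index=1)
```

Keeping the mapping in a table means a new bend or twist can be bound to an action without touching the handler itself.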

Such a flexible UI lets us interact naturally with a smartphone even when our hands are too occupied to use the touchscreen. It could well be the answer to screens that don’t respond to gloved fingers, or to fingers too big to hit the right buttons. With this UI, all you need to do is squeeze the phone with your palm to pick up a call.

4. Augmented Reality (AR)

We are already experiencing AR in smartphone apps like Wikitude, but it is still in its elementary stages of development. AR is getting its biggest boost in awareness from Google’s upcoming Project Glass, a pair of wearable glasses that lets you see, and interact with, virtual extensions of reality. Here’s an awesome demo of what to expect.

AR doesn’t have to be delivered through glasses; any device that can interact with a real-world environment in real time will do. Picture a see-through device that you can hold over objects, buildings and your surroundings to get useful information about them. For example, when you come across a signboard in a foreign language, you could look through the device to see it translated for easy reading.

AR can also use your natural environment to create mobile user interfaces that you interact with, by projecting displays onto walls and even your own hands.

Check out how it is done with SixthSense, a prototype of a wearable gestural interface developed by MIT that utilizes AR.

5. Voice User Interface (VUI)

Since the ‘Put That There’ video presentation by Chris Schmandt in 1979, voice recognition has yet to achieve revolutionary success. The most recent VUI hype has to be Siri, a personal assistant application built into Apple’s iOS. It uses a natural-language user interface to carry out voice commands, and is available only on Apple devices.

However, VUI also plays a supporting role in other user interface technologies, such as Google Glass itself. Glass works basically like a smartphone, except that you don’t have to hold it up and operate it with your fingers. Instead, you wear it as eyewear and give it commands by voice.

What VUI still lacks is reliable recognition of what you say. Perfect that, and it will be built into the user interfaces of the future. At the rate smartphone capabilities are expanding, it’s just a matter of time before VUI takes centre stage as the primary form of human-computer interaction for any computing system.

6. Tangible User Interface (TUI)

Imagine a computer system that fuses the physical environment with the digital realm so that it can recognize real-world objects. In Microsoft PixelSense (formerly known as Surface), the interactive computing surface can recognize and identify objects placed on the screen.

In Microsoft Surface 1.0, light reflected from objects is picked up by multiple infrared cameras. This allows the system to capture and react to items placed on the screen.

In a more advanced version of the technology (the Samsung SUR40 with Microsoft PixelSense), the screen uses built-in sensors instead of cameras to detect what touches it. On this surface, you can create digital paintings with real paintbrushes, with each stroke based on input from the actual brush tip.

The system is also programmed to recognize sizes and shapes and to interact with embedded tags; for example, placing a tagged name card on the screen displays the card’s information. A smartphone placed on the surface could trigger the system to seamlessly display the images in the phone’s gallery on the screen.

7. Wearable Computer

As the name suggests, wearable computers are electronic devices that you wear like an accessory or clothing. They can be a pair of gloves, eyeglasses, a watch or even a suit. The key feature of a wearable UI is that it keeps your hands free and does not hinder your daily activities. In other words, it serves as a secondary activity that you can turn to as and when you wish.

Think of it as having a watch that works like a smartphone. Sony released an Android-powered SmartWatch earlier this year that can be paired with your Android phone via Bluetooth. It can notify you of new emails and tweets. As with smartphones, you can download compatible apps onto the Sony SmartWatch for easy access.

Expect more wearable UIs in the near future as microchips with smart capabilities keep shrinking and can be fitted into everyday wear.

8. Sensor Network User Interface (SNUI)

Here’s an example of a fluid UI made of multiple compact tiles, each with a color LCD screen, a built-in accelerometer and IrDA infrared transceivers, which can interact with one another when placed in close proximity. To put it simply, they’re like Scrabble tiles with screens that change to reflect data when placed next to each other.
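The proximity behaviour can be illustrated with a toy Python model. This is not how Siftables is actually programmed: each hypothetical tile has a letter and a position, two tiles “see” each other when they are within an assumed infrared range, and each tile’s display shows its own letter plus those of its in-range neighbours, read left to right.

```python
import math
from dataclasses import dataclass

IR_RANGE = 1.5  # hypothetical max distance at which two tiles can "see" each other


@dataclass
class Tile:
    letter: str
    x: float
    y: float
    display: str = ""


def neighbours(tile, tiles, max_range=IR_RANGE):
    """Other tiles close enough to exchange infrared signals with this one."""
    return [
        other for other in tiles
        if other is not tile
        and math.dist((tile.x, tile.y), (other.x, other.y)) <= max_range
    ]


def update_displays(tiles):
    """Each tile shows its own letter plus its neighbours' letters, left to right."""
    for tile in tiles:
        group = [tile] + neighbours(tile, tiles)
        group.sort(key=lambda t: t.x)
        tile.display = "".join(t.letter for t in group)


tiles = [Tile("C", 0, 0), Tile("A", 1, 0), Tile("T", 2, 0), Tile("Z", 10, 0)]
update_displays(tiles)
print([t.display for t in tiles])  # ['CA', 'CAT', 'AT', 'Z']
```

Only the middle tile is within range of both others, so it alone spells the full word; moving the far-off tile next to the row would change what its neighbours show.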

As you can see in this demo video of Siftables, users physically interact with the tiles by tilting, shaking, lifting and bumping them against other tiles. These tiles could serve as a highly interactive learning tool for young children, who get immediate reactions to their actions.

SNUI is also great for simple puzzle games where you win by shifting and rotating tiles. You can also sort images physically by grouping the tiles according to your preferences. In effect, SNUI is a more distributed form of TUI: instead of one screen, it’s made up of several smaller screens that interact with one another.

Most Highly-Anticipated UI?

As these UIs become more intuitive and natural for a new generation of users, we are treated to a more immersive computing experience that will continually test our ability to digest the flood of information they deliver. It will be overwhelming at times and exciting at others, and it’s definitely something to look forward to in the technologies to come.

More!

Interested to see what the future has in store for us? Check out the links below.

- 5 Top Augmented Reality Apps For Education

- 5 Key Features To Expect In Future Smartphones

- Jaw-Dropping TED Videos You Should Not Miss

Which of these awesome UIs are you most excited about? Or do you have other ideas for next-gen UI? Share your thoughts in the comments.