What Researchers Learned From Our Facebook Usage

Facebook is a gold mine of data and has been a hotbed of studies on online social behavior. The social media giant even has a team of data analysts (aka the Data Science Team) looking at all the data we have put up on Facebook. Whether it is information about ourselves in the About section or a simple Like, Facebook knows it all.

Now if this isn’t bordering on creepy, I don’t know what is. It is also perhaps why users were outraged when it was announced that a recent study had manipulated their News Feeds to show that their moods could be swayed by whether the posts they saw were positive or negative.

One can’t help but wonder what else Facebook knows about us, and what other studies were done with or without our knowledge. Well, we did some digging, and here are 8 studies by researchers and Facebook that reveal things about ourselves.

1. Facebook Plays With Our Emotions

We will begin with the one that has been making headlines recently: 689,003 users were shown either primarily positive or primarily negative posts for 1 week in January 2012. The results showed that our emotions can be influenced by what we see on our feed. Those who were exposed to more negative posts were more likely to post negative statuses of their own, while those who were exposed to more positive posts were more likely to post positive ones.

The problem, however, was not in the research but in how it was conducted: no one was informed or asked for consent to be part of the experiment. As usual, Facebook and the researchers defended the study by stating that consent had been given via the Data Use Policy (which they know no one reads), which covers taking part in studies that Facebook wants to conduct on its users. It didn’t really matter that the term "Research" was added only in May 2012, months after the experiment took place.

2. You Are What You Like

A study conducted in 2013 by the University of Cambridge revealed that the things we like on Facebook reveal our personality. That doesn’t sound groundbreaking; it’s pretty obvious that if my favorite book is the Bible, then there is a high possibility that I am a Christian. But this study goes beyond that. Apparently, your likes reveal your ethnicity, sex, age, political leanings, sexual orientation, IQ level, emotional state and whether you abuse substances (whoa).

At least this time, the 58,000 users volunteered to have their Facebook likes examined through an app the researchers came up with. After looking at all your likes, the researchers can deduce who you are. The method was reported to have a high accuracy rate, although there were some odd findings, like how if you are smart, you prefer curly fries and Morgan Freeman’s voice. Have a look at the study here.

3. Facebook Knows Your Close Friends

Unless you actively stalk someone via Facebook, it’s probably safe to say that you mostly like or comment on the statuses (and hence, lives) of your close friends. This is the basis of a study conducted by the University of California, San Diego, which involved 789 Facebook users.

The participants were asked to list the people they were closest to. The researchers then examined the Facebook activity of the participants and were able to accurately predict who the participants’ closest friends were 84% of the time. This may not seem impressive, but it definitely undercuts the notion that your IRL relationships are separate from your online relationships.

(Image source: PLOS One)

4. We Actively Censor Ourselves

If you have ever wondered if Facebook could see what you typed into your status box but didn’t publish, then the politically correct answer is No. And yet, Facebook’s data scientist Adam Kramer and Facebook intern Sauvik Das tracked the unpublished status updates of 3.9 million users over the course of 17 days in 2012 and came to the conclusion that we actively self-censor when we are on Facebook.

By matching their findings with user demographics, social circles and ideological leanings, the researchers found that people will censor opposing views unless they know their views can be tolerated by their social circles.

But if they don’t track our unpublished statuses, and the content of the statuses as well as the keystrokes are not studied, just what metrics do they base this study on? Or are they being less than truthful when it comes to the methodology used in the study?

(Image source: Ars Technica)

5. Teens May Be Leaving Facebook

Media outlets had a field day with that headline about teenagers abandoning the 10-year-old social network. Azzief Khaliq took a look at the situation in his own post here, where he argued that, in truth, teens are fickle-minded: they may hate Facebook, but they weren’t actually leaving it in droves.

Then again, reports later surfaced about teens making the move from Facebook to messaging apps. Digital agency iStrategyLabs also reported that there are now 3 million fewer teens aged 13 to 17 on Facebook compared to 2011 but, as this article pointed out, the data may be flawed because these teens could be lying about their age. Or perhaps it is simply that these teens outgrew their teen status.

(Image source: iStrategyLabs)

6. Facebook Will Die In 3 Years

All the hullabaloo about teens leaving Facebook led to reports about teens deeming Facebook "dead to them". Then there is that study predicting the rapid decline of the social network giant and its eventual death within 3 years. The study, conducted by a couple of Princeton researchers, borrowed its approach from epidemiology, the study of infectious diseases: in these models, a disease spreads quickly and then dies out suddenly.

The researchers tested this model on MySpace, then predicted that Facebook will lose 80% of its peak user base by 2017, much like how MySpace lost its users. As much as we would all love to see Facebook die (guilty!), a Slate article tells us why this study is flawed (hint: the eclectic combination of aerospace engineering researchers studying online social behavior with infectious disease models was pretty hard to miss).
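For the curious, here is a rough idea of what an epidemic-style adoption curve looks like in code. This is only a minimal sketch of a textbook SIR (susceptible, infected, recovered) model, not the Princeton researchers’ actual model or data. The function name `simulate_sir`, the rates `beta` and `gamma`, and the population numbers are all made up for illustration, with "infected" standing in for active users.

```python
def simulate_sir(beta, gamma, s0, i0, r0, days, dt=0.1):
    """Euler-step a basic SIR model and return one (day, s, i, r) row per day.

    beta  -- hypothetical "infection" rate (how fast non-users join)
    gamma -- hypothetical "recovery" rate (how fast users quit)
    """
    s, i, r = float(s0), float(i0), float(r0)
    n = s + i + r  # total population stays constant
    steps_per_day = round(1 / dt)
    history = [(0, s, i, r)]
    for day in range(1, days + 1):
        for _ in range(steps_per_day):
            new_users = beta * s * i / n * dt  # non-users "catching" the network
            quitters = gamma * i * dt          # active users losing interest
            s -= new_users
            i += new_users - quitters
            r += quitters
        history.append((day, s, i, r))
    return history


# Illustrative numbers only: 1,000 people, 10 of them early adopters.
history = simulate_sir(beta=0.5, gamma=0.1, s0=990, i0=10, r0=0, days=200)
peak_day, _, peak_users, _ = max(history, key=lambda row: row[2])
final_users = history[-1][2]
print(f"Active users peak on day {peak_day}, then fall to "
      f"{final_users / peak_users:.1%} of that peak by day 200")
```

With these invented rates, active users shoot up, peak, and then drain away, which is the shape the prediction leans on. Whether a social network really behaves like a disease is exactly what critics of the study question.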

About the only useful thing this research revealed is that everyone loves a dying Facebook story.

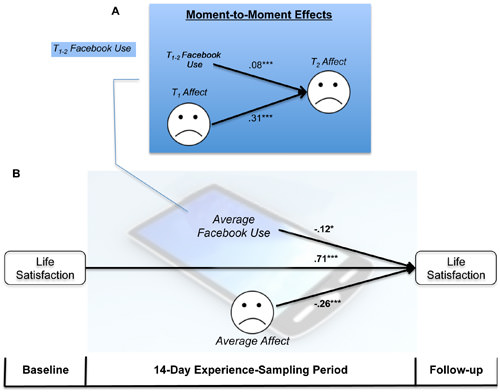

7. Facebook Is Depressing

Well before the finding that negative statuses beget more negative statuses, a 2013 study by the University of Michigan had already come to the conclusion that Facebook makes people depressed. Researchers texted 82 college participants 5 times a day for 2 weeks to gauge how they felt while using the social network. The study found that the more often you use Facebook, the less happy you are, a finding that other similar research has previously revealed.

An extended study by Michigan researcher Ethan Kross, however, revealed that this may be because we are using Facebook wrong. You shouldn’t just read your Facebook feed like a spectator: either actively participate and engage with your friends socially, or get yourself off the social network.

(Image source: PLOS One)

8. Facebook Motivates Voting

Did you know that Facebook will be releasing “I’m a Voter” buttons across the world? India was the first to get the button, during the elections that saw Narendra Modi become its new prime minister. The reasoning behind this is that the Voter button will boost voter turnout. In fact, a 2012 study by the University of California, San Diego, found something similar during the 2010 US congressional elections.

On election day, Facebook released a banner which reminded users that it was indeed election day, accompanied by polling info, as well as a button that let them see which of their friends had voted. Direct exposure to the banner spurred 60,000 more people, who otherwise would not have voted, to vote. The research also suggested that 280,000 other users were indirectly influenced to vote, a 0.6% hike from the previous election in 2008.

The graphics were non-partisan, but one can’t help but consider this scary thought: Can Facebook eventually influence who we vote for?

(Image source: Nature)